Many companies are seeking creative ways to extract valuable business insights from data. Historically, data architectures have been purpose-built, with tightly coupled components and a reliance on batch processing that limit extensibility and scalability. An event-driven architecture changes this paradigm by decoupling technical components so they can operate independently. This approach delivers insights quickly and accurately, while also offering new ways to engage users and simplifying complex modernization efforts.

At its core, an event-driven architecture is a software design pattern in which systems process and react to events in real time. Events are triggered or detected through a wide range of inputs, such as user interactions, sensor readings, modifications to data values, or the integration of third-party data sources, and are then sent to independent services for processing. These architectures can be implemented in several ways; one common approach is event sourcing, in which each state change is recorded as an event in an append-only log, and the events can be replayed in order to re-create the current state of the system.
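To make event sourcing concrete, here is a minimal sketch in which the current state is never stored directly; it is rebuilt by replaying events in order. The `Event` type, the account example, and the event kinds are hypothetical illustrations, not part of any particular framework.

```python
from dataclasses import dataclass
from functools import reduce
from typing import Dict, List

# Hypothetical event type for illustration: each event records one state change.
@dataclass(frozen=True)
class Event:
    account_id: str
    kind: str        # "opened", "deposited", or "withdrew"
    amount: int = 0

def apply(state: Dict[str, int], event: Event) -> Dict[str, int]:
    """Return a new state with one event applied; the input state is not mutated."""
    new_state = dict(state)
    if event.kind == "opened":
        new_state[event.account_id] = 0
    elif event.kind == "deposited":
        new_state[event.account_id] += event.amount
    elif event.kind == "withdrew":
        new_state[event.account_id] -= event.amount
    else:
        raise ValueError(f"unknown event kind: {event.kind}")
    return new_state

def replay(events: List[Event]) -> Dict[str, int]:
    """Rebuild the current state by applying every event in order, starting from empty."""
    return reduce(apply, events, {})

log = [
    Event("acct-1", "opened"),
    Event("acct-1", "deposited", 100),
    Event("acct-1", "withdrew", 30),
    Event("acct-2", "opened"),
    Event("acct-2", "deposited", 50),
]

print(replay(log))      # → {'acct-1': 70, 'acct-2': 50}
print(replay(log[:2]))  # → {'acct-1': 100}
```

Replaying a prefix of the log reconstructs the state as it was at that point in time, which is what makes the event history useful for auditing and reprocessing.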

Event-driven data architectures extend this concept by deriving events from database changes captured in near real time. These changes define and trigger downstream events and the actions taken in response to them. This provides a streamlined, non-intrusive, real-time capability that can adapt to new data and changing conditions. One key technical aspect of an event-driven data architecture is the use of a streaming platform, such as Apache Kafka or Amazon Kinesis, rather than a relational data store, as the layer through which data moves between systems. These platforms support real-time processing of large volumes of data and can trigger data consumption events and actions based on the data available in the stream.
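One way to picture how captured changes "define and trigger" actions is a small router that maps a table and operation to the handlers that should run. This is a minimal sketch; the change-record shape (`table`, `operation`, `row`) and the `ChangeRouter` class are hypothetical, not the format of any specific CDC tool.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

# Hypothetical change-record shape: {"table": ..., "operation": ..., "row": {...}}
ChangeRecord = Dict[str, object]
Handler = Callable[[ChangeRecord], str]

class ChangeRouter:
    """Maps (table, operation) pairs to the actions that run when that change occurs."""

    def __init__(self) -> None:
        self._handlers: Dict[Tuple[str, str], List[Handler]] = defaultdict(list)

    def on(self, table: str, operation: str, handler: Handler) -> None:
        self._handlers[(table, operation)].append(handler)

    def dispatch(self, change: ChangeRecord) -> List[str]:
        key = (str(change["table"]), str(change["operation"]))
        # Changes with no registered handler are ignored.
        return [handler(change) for handler in self._handlers.get(key, [])]

router = ChangeRouter()
router.on("orders", "insert", lambda c: f"notify fulfillment of order {c['row']['id']}")
router.on("orders", "update", lambda c: f"reindex order {c['row']['id']}")

print(router.dispatch({"table": "orders", "operation": "insert", "row": {"id": 7}}))
# → ['notify fulfillment of order 7']
print(router.dispatch({"table": "customers", "operation": "delete", "row": {"id": 3}}))
# → []
```

Because handlers are registered rather than hard-wired into the source system, new reactions to the same change can be added without touching the producer.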

A successful pattern for implementing an event-driven data architecture is to use log-based change data capture (CDC) tools to monitor a relational database and publish its changes to a data stream. CDC captures source database activity in near real time and makes data changes available to other data consumers. Unlike polling and trigger-based methods, log-based CDC reads changes from the database's transaction log rather than repeatedly querying tables or firing triggers on every write, so it adds minimal load to the source system.
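The essence of log-based CDC is a reader that tails the transaction log from a saved position (checkpoint) and publishes only entries it has not seen before. The sketch below simulates this with an in-memory log and stream; `LogEntry`, `CdcReader`, and the LSN field are simplified, hypothetical stand-ins for what a real CDC tool such as DMS manages internally.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass(frozen=True)
class LogEntry:
    lsn: int                 # log sequence number: the entry's position in the log
    operation: str           # "insert", "update", or "delete"
    table: str
    row: Dict[str, object]

@dataclass
class CdcReader:
    """Tails a simulated transaction log and publishes new entries to a stream."""
    stream: List[LogEntry] = field(default_factory=list)
    checkpoint: int = 0      # highest LSN published so far

    def read_new_entries(self, transaction_log: List[LogEntry]) -> int:
        # Only the log is read; the source tables are never queried.
        new_entries = sorted(
            (e for e in transaction_log if e.lsn > self.checkpoint), key=lambda e: e.lsn
        )
        for entry in new_entries:
            self.stream.append(entry)    # publish to the stream
            self.checkpoint = entry.lsn  # remember where to resume
        return len(new_entries)

log = [
    LogEntry(1, "insert", "customers", {"id": 1, "name": "Ana"}),
    LogEntry(2, "update", "customers", {"id": 1, "name": "Ana B."}),
]
reader = CdcReader()
print(reader.read_new_entries(log))  # → 2
log.append(LogEntry(3, "delete", "customers", {"id": 1}))
print(reader.read_new_entries(log))  # → 1 (only the new entry is published)
print([e.operation for e in reader.stream])  # → ['insert', 'update', 'delete']
```

The checkpoint is what lets the reader resume after a restart without re-publishing or skipping changes.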

When changes are pushed to a stream, consumer functions can act on changing data in real time without the need for a direct connection to the source data platform. This simplifies consumption and decouples data consumers from data producers. Additionally, multiple data consumers can read changes from a single stream, eliminating point-to-point data delivery and allowing consumption at different intervals based on the business need. Event-driven architectures can also be used to:

- Improve search capabilities for end users by streaming data from an operational system to a search index, which offloads search queries from the operational system while improving search performance and the usability of the data.

- Bolster data governance by publishing high-quality data from systems of record in real time, so that well-managed, golden-record data can be easily maintained and made available to downstream systems.

- Publish notifications to downstream applications that need to act on a change in an operational system, so that the action can be initiated in near real time. Alternatively, consumers can listen to the stream directly and act on events as they are published.

- Deliver real-time updates to the data warehouse. Rather than wait for a nightly batch feed, changes can be applied to the warehouse as they happen, improving the timeliness of analytical reporting.
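The decoupling described above comes from each consumer tracking its own position in a shared stream: a notifier can read changes as they arrive, while a warehouse loader reads later in larger batches and still sees every change. This in-memory sketch assumes a hypothetical `(operation, key, row)` change format carrying full row images; the `Stream` class and `apply_batch` function are illustrative, not a real client API.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical change record: (operation, primary_key, full_row_or_None)
Change = Tuple[str, int, Optional[Dict[str, object]]]

class Stream:
    """Append-only stream; each consumer keeps its own read position (offset)."""

    def __init__(self) -> None:
        self._records: List[Change] = []
        self._offsets: Dict[str, int] = {}

    def publish(self, change: Change) -> None:
        self._records.append(change)

    def read(self, consumer: str, max_records: int = 100) -> List[Change]:
        start = self._offsets.get(consumer, 0)
        batch = self._records[start:start + max_records]
        self._offsets[consumer] = start + len(batch)  # advance only this consumer
        return batch

def apply_batch(table: Dict[int, Dict[str, object]], changes: List[Change]) -> None:
    """Apply a micro-batch of changes to a keyed warehouse table, in stream order."""
    for operation, key, row in changes:
        if operation == "delete":
            table.pop(key, None)
        elif operation in ("insert", "update"):
            table[key] = dict(row or {})  # assumes full row images, not partial updates
        else:
            raise ValueError(f"unsupported operation: {operation}")

stream = Stream()
stream.publish(("insert", 1, {"id": 1, "status": "new"}))
stream.publish(("update", 1, {"id": 1, "status": "shipped"}))

# A near-real-time consumer (e.g. a notifier) reads each change as it arrives.
notified = [f"{op} {key}" for op, key, _ in stream.read("notifier")]
stream.publish(("insert", 2, {"id": 2, "status": "new"}))
stream.publish(("delete", 1, None))
notified += [f"{op} {key}" for op, key, _ in stream.read("notifier")]

# The warehouse consumer reads later, at its own pace, and still sees every change.
warehouse: Dict[int, Dict[str, object]] = {}
apply_batch(warehouse, stream.read("warehouse"))

print(notified)   # → ['insert 1', 'update 1', 'insert 2', 'delete 1']
print(warehouse)  # → {2: {'id': 2, 'status': 'new'}}
```

Real streaming platforms retain records only for a configurable retention period, so a slower consumer still has to read within that window. In an actual warehouse, a batch like this is typically loaded into a staging table and then merged into the target.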

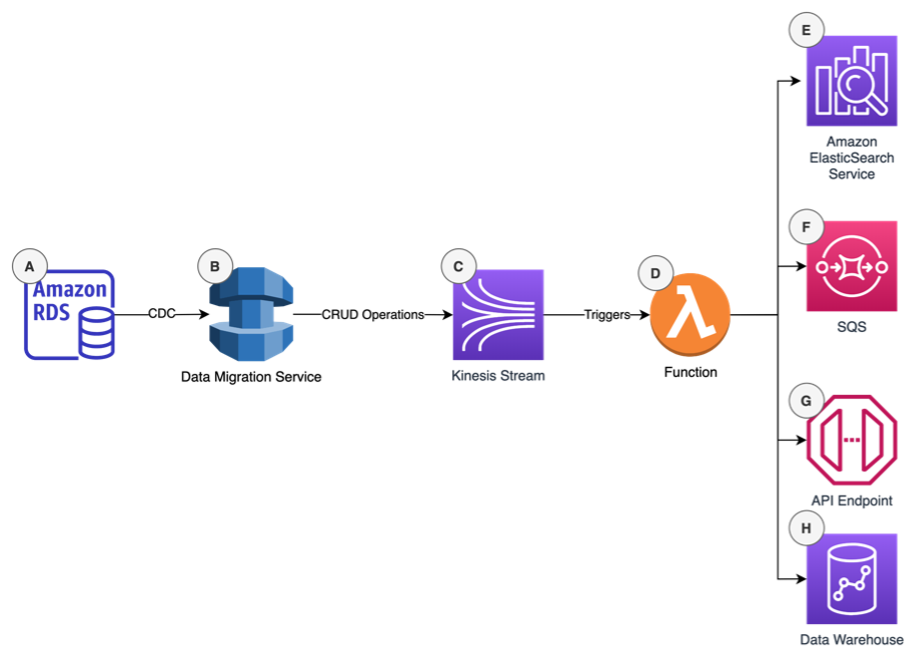

The following example shows an event-based data architecture that uses these Amazon Web Services (AWS) managed and serverless components to react to changes in the source data:

- Amazon Relational Database Service (RDS) – Operational system based on a relational database.

- AWS Database Migration Service (DMS) – Uses CDC to read database logs and publish insert, update, and delete changes to a data stream.

- Amazon Kinesis Data Streams – Data streaming platform that receives CDC data from DMS and makes it available for consumption.

- AWS Lambda – Serverless functions used for data quality validation, transformation, and preparation before delivery to the data consumer. Functions can also micro-batch data to reduce the load on target platforms.

- Amazon Elasticsearch Service (since renamed Amazon OpenSearch Service) – Target system used for the search workload.

- Amazon Simple Queue Service (SQS) – Messaging service used to track data quality issues detected in the pipeline and to support reliable, polling-based data delivery.

- API Endpoint – Downstream APIs that can be called to push data to other systems as changes occur.

- Amazon Redshift – Target data warehouse platform used for analytics.
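A Lambda function in this pipeline might decode each Kinesis record, skip non-data records, validate the row, set aside failures for review, and group the rest into micro-batches. The sketch below assumes DMS's JSON message format with top-level `data` and `metadata` keys (including `record-type`, `operation`, `schema-name`, and `table-name`); verify this against your DMS task settings. The validation rule, `MAX_BATCH_SIZE`, and the returned structure are hypothetical choices for illustration, and rejected records are collected in memory rather than actually sent to SQS.

```python
import base64
import json
from typing import Any, Dict, List

MAX_BATCH_SIZE = 2         # hypothetical micro-batch size; tune for the target platform
REQUIRED_FIELDS = ("id",)  # hypothetical data quality rule

def validate(record: Dict[str, Any]) -> List[str]:
    """Return a list of data quality problems (empty if the record is valid)."""
    data = record.get("data") or {}
    return [f"missing {name}" for name in REQUIRED_FIELDS if data.get(name) is None]

def handler(event: Dict[str, Any], context: Any = None) -> Dict[str, Any]:
    """Lambda-style handler for a Kinesis trigger carrying DMS change records."""
    valid: Dict[str, List[Dict[str, Any]]] = {}
    rejected: List[Dict[str, Any]] = []
    for item in event.get("Records", []):
        # Kinesis delivers each record's payload base64-encoded.
        payload = json.loads(base64.b64decode(item["kinesis"]["data"]))
        metadata = payload.get("metadata", {})
        if metadata.get("record-type") != "data":
            continue  # skip control records (assumed DMS convention)
        problems = validate(payload)
        if problems:
            # In a deployment, these could be sent to an SQS queue for review.
            rejected.append({"payload": payload, "problems": problems})
            continue
        table = f"{metadata.get('schema-name')}.{metadata.get('table-name')}"
        valid.setdefault(table, []).append(
            {"operation": metadata.get("operation"), "row": payload["data"]}
        )
    # Split each table's changes into micro-batches to limit load on the target.
    batches = {
        table: [rows[i:i + MAX_BATCH_SIZE] for i in range(0, len(rows), MAX_BATCH_SIZE)]
        for table, rows in valid.items()
    }
    return {"batches": batches, "rejected": rejected}

# --- Local usage with a hand-built event (hypothetical test data) ---
def kinesis_record(payload: Dict[str, Any]) -> Dict[str, Any]:
    data = base64.b64encode(json.dumps(payload).encode("utf-8")).decode("ascii")
    return {"kinesis": {"data": data}}

def dms_change(operation: str, row: Dict[str, Any]) -> Dict[str, Any]:
    return {"data": row, "metadata": {"record-type": "data", "operation": operation,
                                      "schema-name": "sales", "table-name": "orders"}}

event = {"Records": [
    kinesis_record(dms_change("insert", {"id": 1})),
    kinesis_record(dms_change("update", {"id": 1})),
    kinesis_record(dms_change("insert", {"id": 2})),
    kinesis_record(dms_change("insert", {"amount": 5})),
]}
result = handler(event)
print(len(result["batches"]["sales.orders"]))  # → 2
print(result["rejected"][0]["problems"])       # → ['missing id']
```

Keeping validation and batching in the function, rather than in the source database or the target, is what lets each downstream consumer receive clean data without coupling it to the producer.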

As companies modernize systems, the way they process and interact with data changes. Event-driven data architectures allow source database changes to be captured and propagated to target systems as they happen, enabling downstream systems to react to data without waiting for nightly batch feeds. When planning an architecture modernization, consider an event-driven data architecture that decouples source and target systems and allows for an incremental migration approach. As they modernize, companies will use event-based systems to get data into the hands of the business faster. The organizations that succeed will have more timely data available, improving their data-driven decision-making and setting them apart from their peers.